MLOps Through Human Anatomy — Patterns, Preparation, and a Sharpened Saw

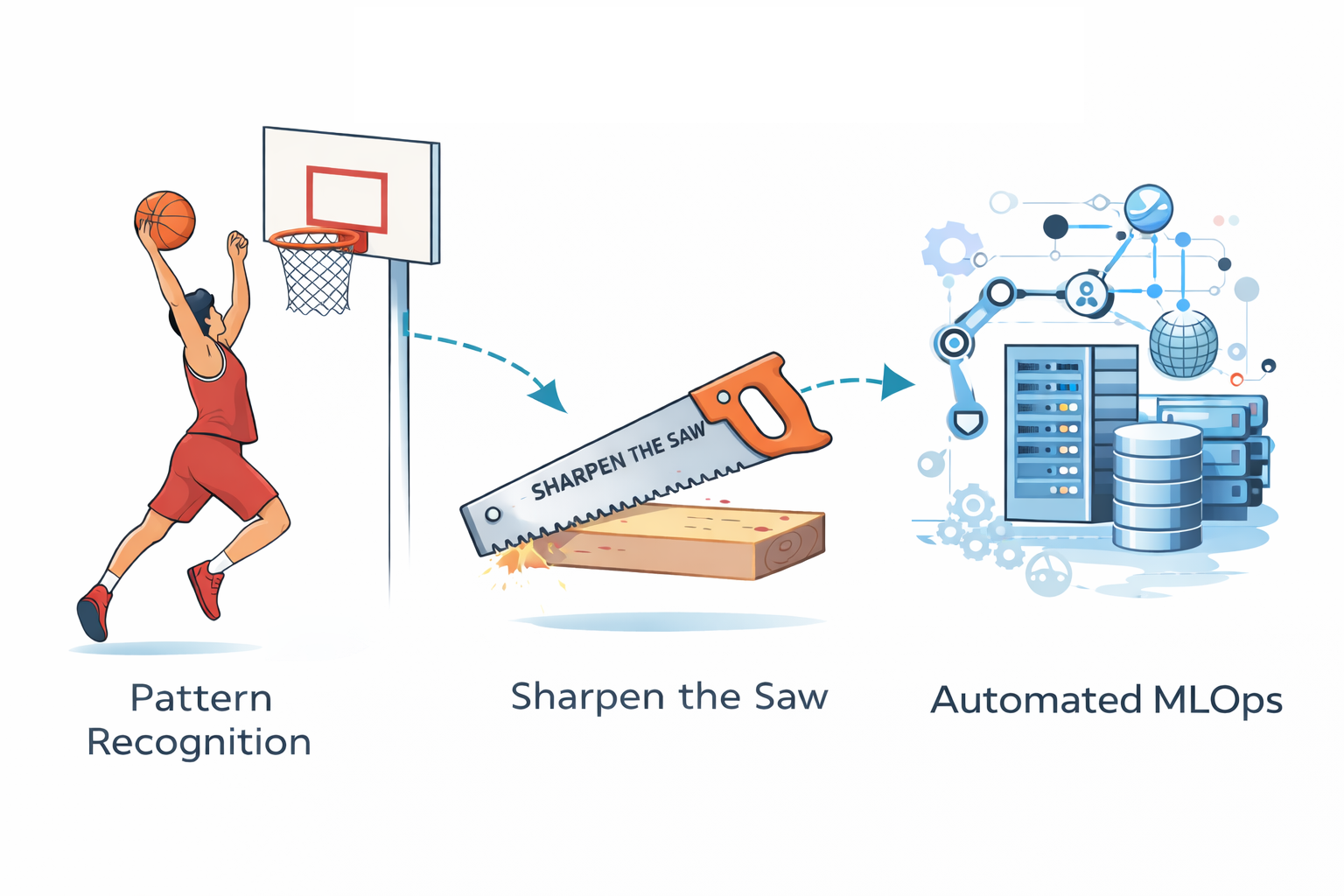

When people first encounter Machine Learning Operations (MLOps), it often feels like walking into a crowded basketball court for the first time. There are tools everywhere — cloud platforms, containers, monitoring systems, APIs, pipelines, experiment tracking — all moving at once. At first glance, it looks chaotic. Nothing seems connected, and the sheer number of technologies can make the whole field feel overwhelming.

But something interesting happens once you stay long enough. Patterns begin to appear.

This realization reminds me of an idea from The 7 Habits of Highly Effective People by Stephen R. Covey, where Covey describes the habit he calls “Sharpen the Saw.” The idea is simple: if you take time to prepare your tools properly before cutting, the work becomes dramatically easier. A sharpened saw cuts smoothly and efficiently, while a dull saw struggles no matter how hard you push.

MLOps works in much the same way. When the system is designed carefully — when every component is sharpened and connected correctly — automation begins to run almost effortlessly. Instead of constant manual effort, the system becomes capable of running continuously and reliably.

One way to understand this ecosystem is to imagine MLOps as a living human body.

The Brain: Where the Model Learns

In a human body, the brain is responsible for learning, reasoning, and making decisions. Every experience strengthens certain neural pathways and gradually improves the brain’s ability to respond to the world.

Machine learning models behave similarly. During training, algorithms analyze data and adjust internal parameters in order to detect patterns. Each adjustment is meant to reduce prediction error, so the model’s predictions improve as training proceeds. Frameworks such as TensorFlow, PyTorch, and scikit-learn are commonly used to build and train these models.

In this analogy, the trained model represents the brain of the system. It is the component that actually understands patterns in the data and produces intelligent outputs.
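To make "adjusting internal parameters" concrete, here is a minimal sketch in plain Python rather than any of the frameworks named above. It fits a line to a handful of made-up points using gradient descent on mean squared error. The function name `train_linear`, the data, the learning rate, and the epoch count are all assumptions chosen for illustration.

```python
# Toy training loop: fit y = w * x + b by gradient descent on mean squared error.
# The data and hyperparameters are invented for illustration only.

def train_linear(xs, ys, lr=0.05, epochs=2000):
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step each parameter against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1
w, b = train_linear(xs, ys)
print(f"w={w:.3f}, b={b:.3f}")
```

On this toy data the loop should recover values close to the slope 2 and intercept 1 that generated the points. Real frameworks automate the gradient computation and scale it to millions of parameters, but the underlying loop has the same shape.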

Memory: Data and Knowledge Storage

Human intelligence would be impossible without memory. Our brains store experiences, facts, and learned skills so they can be recalled later.

MLOps systems also require a form of memory. Datasets, trained models, experiment results, and logs must all be stored reliably. Cloud providers such as Amazon Web Services, Google Cloud, and Microsoft Azure offer scalable storage services (for example Amazon S3, Google Cloud Storage, and Azure Blob Storage) where this information can be preserved.

This storage layer acts as the system’s memory. Without it, models could not be retrained, experiments could not be reproduced, and learning would effectively start from zero every time.
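As a rough sketch of what this memory can look like, the snippet below saves a model together with a metadata file under a versioned path and verifies a checksum on load. A local temporary directory stands in for cloud object storage. The helper names `save_artifact` and `load_artifact`, the directory layout, and the toy "model" are hypothetical and not part of any particular platform.

```python
import hashlib
import json
import pickle
import tempfile
from pathlib import Path

def save_artifact(store: Path, name: str, version: str, model, metadata: dict) -> Path:
    """Write the pickled model and a JSON sidecar under store/name/version/."""
    target = store / name / version
    target.mkdir(parents=True, exist_ok=False)  # refuse to overwrite an existing version
    payload = pickle.dumps(model)
    (target / "model.pkl").write_bytes(payload)
    sidecar = dict(metadata, sha256=hashlib.sha256(payload).hexdigest())
    (target / "metadata.json").write_text(json.dumps(sidecar, indent=2))
    return target

def load_artifact(store: Path, name: str, version: str):
    """Read a stored model back, checking it against the recorded checksum."""
    target = store / name / version
    payload = (target / "model.pkl").read_bytes()
    metadata = json.loads((target / "metadata.json").read_text())
    if hashlib.sha256(payload).hexdigest() != metadata["sha256"]:
        raise ValueError("artifact checksum mismatch")
    # Only unpickle artifacts from a store you trust.
    return pickle.loads(payload), metadata

store = Path(tempfile.mkdtemp())  # stand-in for a bucket or container
save_artifact(store, "demo-model", "v1", {"w": 2.0, "b": 1.0}, {"dataset": "toy"})
model, meta = load_artifact(store, "demo-model", "v1")
print(model, meta["dataset"])
```

Keeping every version immutable is what makes retraining and reproduction possible later: an old model and the description of the data it was trained on can always be recalled together.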

The Nervous System: APIs and Model Serving

In the human body, the nervous system carries signals between the brain and the rest of the body. When the brain decides to move a hand, the nervous system transmits that signal within a fraction of a second.

In MLOps, APIs and model-serving infrastructure play the same role. Once a model is trained, it must be made accessible so that applications can use it. Developers typically expose the model through an API endpoint, allowing external systems to send inputs and receive predictions.

Frameworks such as FastAPI or Flask allow engineers to build these interfaces. The model remains the brain, while the API layer functions as the nervous system connecting intelligence to the outside world.
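The request and response flow described above can be sketched with nothing but Python's standard library. In practice a framework such as FastAPI or Flask would replace most of this. The `/predict` path, the `{"x": ...}` request shape, and the fixed `MODEL` parameters are assumptions made up for the example.

```python
import json
from http.server import BaseHTTPRequestHandler

# Hypothetical "trained model": fixed parameters of y = w * x + b.
MODEL = {"w": 2.0, "b": 1.0}

def handle_predict(body: bytes):
    """Turn a JSON request body into (status_code, JSON response bytes)."""
    try:
        x = float(json.loads(body)["x"])
    except (ValueError, KeyError, TypeError):
        error = {"error": "expected a JSON object with a numeric 'x' field"}
        return 400, json.dumps(error).encode()
    y = MODEL["w"] * x + MODEL["b"]
    return 200, json.dumps({"prediction": y}).encode()

class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/predict":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_predict(self.rfile.read(length))
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

# To serve locally (blocks until interrupted):
#   from http.server import HTTPServer
#   HTTPServer(("127.0.0.1", 8000), PredictHandler).serve_forever()

print(handle_predict(b'{"x": 3}'))
```

Separating `handle_predict` from the HTTP handler keeps the prediction logic testable without opening a socket, which is a common design choice regardless of the web framework used.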

Muscles: Compute Power

Muscles are responsible for executing the actions directed by the brain. They perform the physical work required for movement and strength.

In the MLOps ecosystem, compute resources fill this role. Training machine learning models and serving predictions can require significant computational power. CPUs, GPUs, and distributed clusters do the heavy lifting needed to run these workloads.

Cloud providers such as Amazon Web Services, Microsoft Azure, and Google Cloud supply scalable infrastructure that allows organizations to increase compute capacity whenever necessary. Just as stronger muscles enable greater physical performance, stronger compute resources enable more complex machine learning capabilities.

Organs and Internal Structure: Containers and Infrastructure

The human body depends on organs working together within a coordinated structure. Each organ performs a specific role while supporting the stability of the whole organism.

In MLOps systems, infrastructure tools provide this internal structure. Technologies such as Docker and Kubernetes are widely used to manage machine learning applications. Docker allows developers to package models with all their dependencies so they run consistently across different environments. Kubernetes orchestrates these containers, restarting failed ones and scaling services up or down so they remain available as traffic changes.

These tools act like the internal organs of the system, coordinating different processes so the overall organism remains stable.

Doctors and Health Monitoring

Even the healthiest body requires monitoring. Doctors check vital signs such as heart rate and blood pressure to detect problems before they become serious.

Machine learning systems require similar oversight. Monitoring tools such as Prometheus and Grafana are a common pairing: Prometheus collects and stores metrics such as latency, error rates, and resource usage, and Grafana turns them into dashboards. Together they support alerts that help engineers identify anomalies or degradation in system performance and model behavior.

In this analogy, these tools function as doctors observing the health of the system and ensuring that it continues to operate safely.
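The kind of check an alert rule encodes can be illustrated in a few lines of plain Python. This is a sketch of the idea only and does not use the Prometheus or Grafana APIs. The function names, the 200 ms latency limit, and the 1% error-rate limit are invented for the example.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def check_health(latencies_ms, error_count, p95_limit_ms=200.0, error_rate_limit=0.01):
    """Return a list of alert messages; an empty list means no threshold was crossed."""
    if not latencies_ms:
        return ["no requests observed in this window"]
    alerts = []
    p95 = percentile(latencies_ms, 95)
    if p95 > p95_limit_ms:
        alerts.append(f"p95 latency {p95:.0f} ms is above {p95_limit_ms:.0f} ms")
    error_rate = error_count / len(latencies_ms)
    if error_rate > error_rate_limit:
        alerts.append(f"error rate {error_rate:.1%} is above {error_rate_limit:.1%}")
    return alerts

# One latency sample per request in the monitoring window (made-up numbers).
healthy = check_health([50.0] * 20, error_count=0)
degraded = check_health([50.0] * 18 + [400.0, 450.0], error_count=1)
print(healthy)
print(degraded)
```

A tail percentile is used instead of the average because a small number of very slow requests can hide behind a healthy-looking mean, much as a single abnormal reading matters more to a doctor than the day's average.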

The Research Diary: Experiment Tracking

Scientists have long relied on detailed lab notebooks to record experiments. These records allow researchers to understand what worked, what failed, and how improvements were achieved.

Machine learning development requires the same discipline. Tools such as MLflow allow teams to track experiments, record hyperparameters, store metrics, and manage model versions. This historical record helps engineers reproduce experiments and compare results across different iterations.

Just as a researcher’s notebook preserves scientific progress, experiment tracking preserves the learning journey of a machine learning system.
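A lab notebook for experiments can be as simple as an append-only log. The sketch below is not the MLflow API. It is a hypothetical stand-in that writes one JSON record per run and then looks up the best run by a chosen metric. The hyperparameters and accuracy values are made up.

```python
import json
import tempfile
import time
from pathlib import Path

def log_run(log_path: Path, params: dict, metrics: dict) -> None:
    """Append one experiment record as a line of JSON."""
    record = {"timestamp": time.time(), "params": params, "metrics": metrics}
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")

def best_run(log_path: Path, metric: str) -> dict:
    """Return the logged run with the highest value for the given metric."""
    with log_path.open(encoding="utf-8") as f:
        runs = [json.loads(line) for line in f if line.strip()]
    return max(runs, key=lambda run: run["metrics"][metric])

log_path = Path(tempfile.mkdtemp()) / "runs.jsonl"
log_run(log_path, {"lr": 0.1, "epochs": 10}, {"accuracy": 0.81})
log_run(log_path, {"lr": 0.01, "epochs": 50}, {"accuracy": 0.88})

best = best_run(log_path, "accuracy")
print(best["params"])
```

Dedicated tracking tools add a UI, artifact storage, and model versioning on top, but the core record is the same: which settings were used, and what result they produced.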

Daily Routine: Workflow Automation

Human life functions through routines. Activities such as eating, sleeping, and working occur in predictable sequences that keep the body operating efficiently.

Machine learning pipelines rely on similar routines. Data must be collected, processed, and used to train models. Evaluations must run, models must be deployed, and updates must occur periodically.

Workflow orchestration systems such as Apache Airflow and Prefect coordinate these tasks. They manage complex pipelines and ensure that every step runs in the correct order. Once these workflows are configured correctly, much of the process can run automatically.
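The core promise of "every step runs in the correct order" comes down to resolving a dependency graph. The sketch below shows that idea with Python's standard `graphlib` module (Python 3.9+) rather than Airflow or Prefect. The task names mirror the steps listed above, and the task bodies are placeholders.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it starts.
pipeline = {
    "collect": set(),
    "preprocess": {"collect"},
    "train": {"preprocess"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}

def run_pipeline(dag, tasks):
    """Run every task after its dependencies and return the execution order."""
    executed = []
    for name in TopologicalSorter(dag).static_order():
        tasks[name]()
        executed.append(name)
    return executed

# Placeholder task bodies; real tasks would load data, train a model, and so on.
tasks = {name: (lambda n=name: print(f"running {n}")) for name in pipeline}
order = run_pipeline(pipeline, tasks)
print(order)
```

Real orchestrators add scheduling, retries, and logging around this ordering, but declaring dependencies and letting the system work out the sequence is the part that lets the routine run without supervision.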

This is where the principle of “sharpening the saw” becomes especially relevant. When pipelines, infrastructure, and monitoring systems are prepared carefully in advance, automation begins to function almost continuously — just like a sharpened saw that cuts smoothly without excessive effort.

The Immune System: Continuous Integration and Deployment

The immune system protects the human body from threats. It identifies harmful intruders and responds quickly to maintain stability.

In MLOps systems, Continuous Integration and Continuous Deployment (CI/CD) pipelines serve a similar function. Tools such as GitHub Actions and Jenkins can run automated tests on every code change and deploy updates only after those tests pass.

CI/CD pipelines help keep unstable changes out of production environments. Every change must pass through automated testing and validation before it becomes part of the live system.
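For a machine learning system, the validation step often includes checks on the model itself and not only on the code. Here is a minimal sketch of such a gate, the kind of script a CI job might call before deployment. The function name `promotion_gate`, the metric names, and the thresholds are hypothetical.

```python
def promotion_gate(candidate: dict, production: dict,
                   min_accuracy: float = 0.85, max_latency_ms: float = 100.0):
    """Return (approved, reasons). All thresholds here are illustrative."""
    reasons = []
    if candidate["accuracy"] < min_accuracy:
        reasons.append("accuracy below minimum")
    if candidate["accuracy"] < production["accuracy"]:
        reasons.append("accuracy regressed against production")
    if candidate["latency_ms"] > max_latency_ms:
        reasons.append("latency above budget")
    return (not reasons), reasons

production = {"accuracy": 0.88, "latency_ms": 40.0}
approved, reasons = promotion_gate({"accuracy": 0.90, "latency_ms": 35.0}, production)
rejected, why = promotion_gate({"accuracy": 0.86, "latency_ms": 120.0}, production)
print(approved, reasons)
print(rejected, why)
```

In a pipeline, a failing gate would typically make the job exit with a non-zero status so that the deployment step never runs, which is the immune response in this analogy.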

Patterns Everywhere

One of the most powerful insights in learning, whether in machine learning, sports, or language acquisition, is that complex systems become understandable once you start to recognize their patterns.

At first, systems appear chaotic because the brain cannot yet see how components relate to each other. Over time, repeated exposure reveals structure. Separate parts begin to connect, and the system becomes interpretable.

In my own experience, this process feels very similar to learning a new environment. The first time you enter a crowded basketball court, everything looks like noise: dozens of players, overlapping games, constant movement. But once you spend time there, patterns begin to appear. You notice who plays together, how games form, how players move, and how possessions flow. What once seemed chaotic gradually becomes structured.

Learning MLOps follows a similar journey. At first, the ecosystem appears overwhelming. But once you understand each component individually — training, storage, APIs, monitoring, infrastructure, automation — the system begins to reveal a coherent structure.

When every component works on its own and connects correctly with the others, the entire system functions smoothly.

A Living System

In the end, MLOps is not just about deploying models. It is about building a living system around them.

The model serves as the brain. Data storage becomes memory. APIs form the nervous system. Compute resources act as muscles. Infrastructure tools provide the internal organs that coordinate the system. Monitoring tools function like doctors, experiment tracking records the system’s learning history, workflow orchestration establishes daily routines, and CI/CD pipelines protect the system like an immune response.

When these elements are properly prepared and connected — when the saw has been sharpened — automation becomes natural. The system can learn, adapt, and operate continuously.

And just like learning a new sport, language, or instrument, once the patterns become visible, what once felt chaotic begins to feel almost effortless.